|

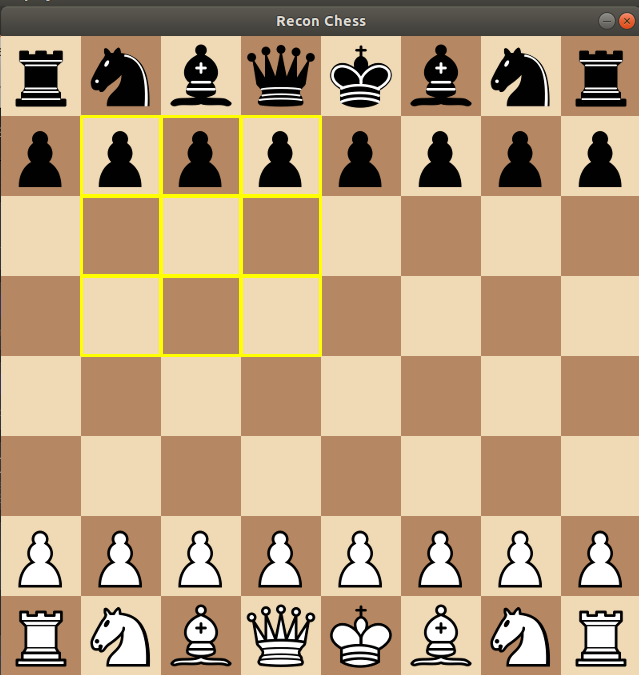

Last summer I saw a posting about a competition called Reconnaissance Blind Chess. I have always been really fascinated by games so I decided to throw my hat in the ring. A few weeks ago, with the competition all done, I was invited to NeurIPS by the JHUAPL to talk about how my bot worked. I figured that it might be a bit interesting to other people then, to hear how my bot worked. First I should talk about what the game was. Reconnaissance Blind Chess is an imperfect information version of chess with a slight twist from other common imperfect information games. An imperfect information game is any game in which you don't know the full state of the board. The most common example is Poker. You know your hand, and what is on the field, but not the other players' hands.

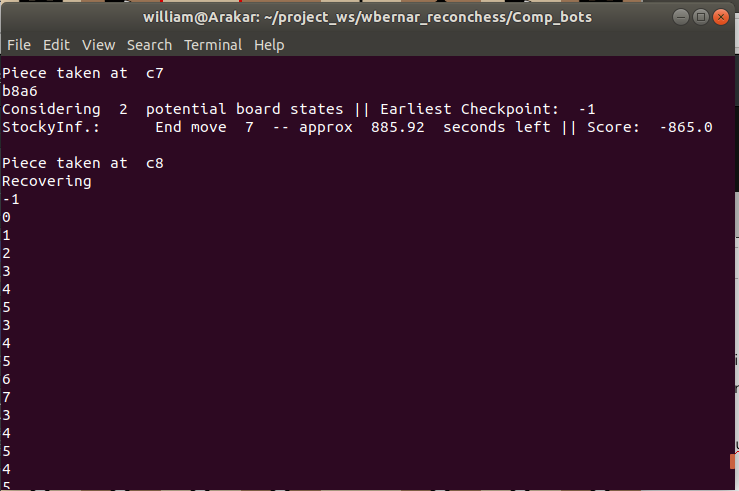

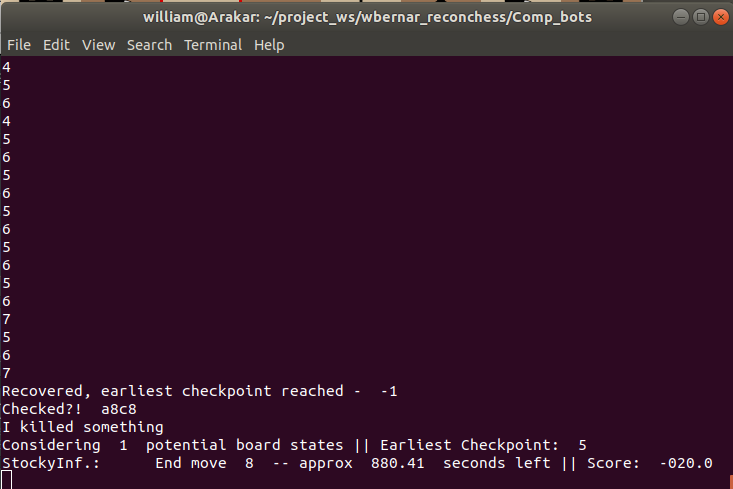

The big issue with this game is that each turn there is O(20) moves available, which means that if your scan is not a "good" scan (i.e. revealing what specific move was taken), the amount of potential boards grows by a factor of around 20. In the tournament you only had a total of 15 minutes to make all of your moves. This, combined with the exponential growth of the potential boards, meant that whatever you did had to be quite lean and efficient if you were to take into account the information across all potential boards. So what would a bot to play this game look like? There are essentially two tasks at play:

My bot was actually pretty naive for both of those. Scanning There could be quite a few potential boards, and my main goal was to minimize the number. To do this I needed a lean O(n) algorithm, as since the number of potential boards could quickly reach the thousands or millions we couldn't afford to ponder for long at this stage. The jist of what I did was as follows:

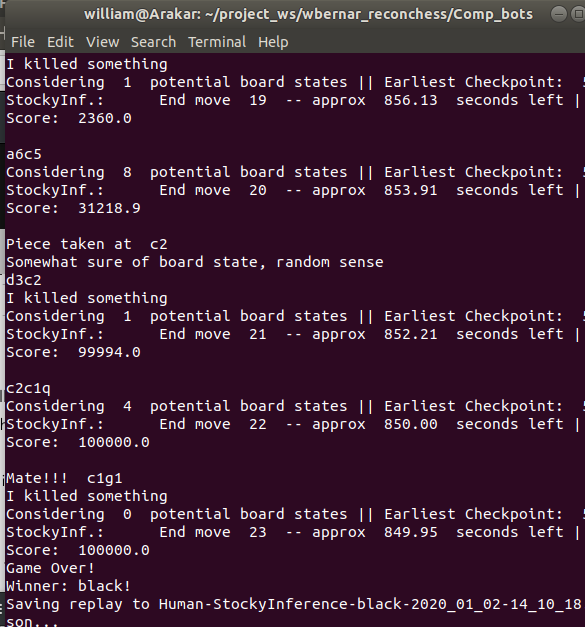

Moving I'm not good at chess. In my family I am by far the worst chess player. Chess is a highly studied game, and there are people, particularly programmers, who are really amazing at chess. I did not have the time or knowledge to make a chess engine that could compete with what was out there. So despite RBC not really being chess, I (and as I found out at NeurIPS, almost all of the competitors) used a chess engine to drive the movement policy. The jist of what I did was the following:

There was some additional jerry-rigged heuristics that were put into play as a result of using a chess engine. In chess you never actually capture the king, in RBC to win you do. So if there was an opportunity to capture a king then the bot would take it. If there wasn't an opportunity to capture the king, and one of the potential boards was in check, then we would prioritize moves that would keep the potential boards out of check. But both of those policies are O(n) with regards to the number of potential states, which grows exponentially. The computer I was running my bot on wasn't the strongest computer in the world. Even with those policies as trimmed and fast as I could get them, there were many situations where a single turn would cause my bot to time out because there were just way too many potential boards to compute. In addition there seemed to be a "critical point" of boards, if the number got too big then the amount of information that a single scan could give would be far less than the amount of new boards generated each turn. So my solution: Uniform Random Sampling Seriously. Why Uniform Sampling? Why not weigh based off of the scoring of the boards? It turned out that similar boards tended to have pretty similar scores, so if we weighed the chance of a board being selected either positively or negatively with its score, then we would end up with a sample of boards that looked very similar. The issue with the boards all looking very similar is that scans give far less information the more similar boards looked. By uniformly sampling we get a selection of boards that often looked more distinct, which let us discriminate between them far easier with scans. How did it perform? Pretty well! I got 1st place in the two test-tournaments (although many bots were not fully developed by this point), and 5th place in the final tournament. The results, and replays of the games, can be found here. All in all, for my first real outing in a game-tournament I'm pretty happy with my bot's performance.

I haven't cleaned it up, and it shows quite a bit. But for the curious who are willing to delve into the jerry-rigged code that evolved out of half a year of working on this, the full code for my bot is below:

2 Comments

Giovanni

4/15/2022 09:49:09 am

Really intresting, thanks to share your experience, I have a question : how is possible to use Stockfish if you don't have all the information about your enemy pieces, for example if you don't know where the queen is Stockfish is going to calculate your move in a way as you are definitely winning, but actually you are not.

Reply

Leave a Reply. |

AuthorHi, I'm Billy, a PhD student studying Math. Archives

January 2020

Categories

All

|

RSS Feed

RSS Feed